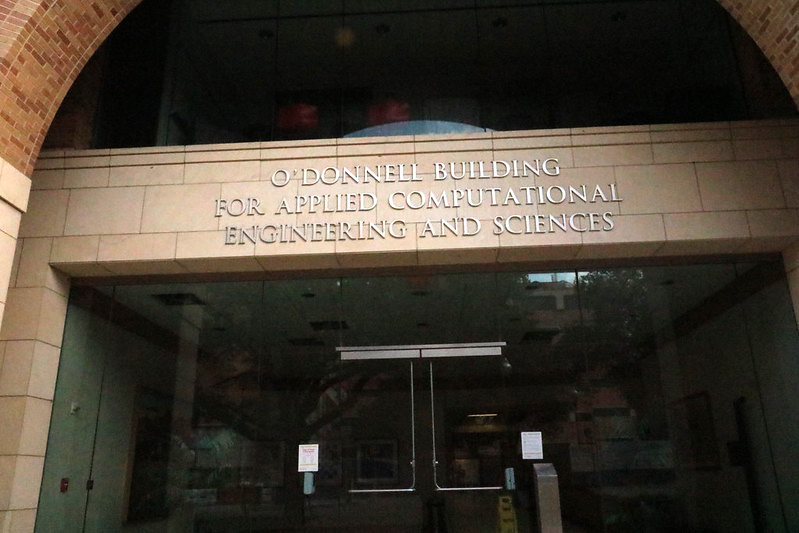

The Oden Institute has its own building, dedicated exclusively to research and graduate education in computational engineering and sciences.

Thanks to our close partnership with the Texas Advanced Computing Center (TACC), the Oden Institute community enjoys access to some of the world’s most powerful high-performance computing facilities.

To reserve space, visit room reservations.

The Peter O'Donnell Building (POB) is the dedicated home on the UT campus for the Oden Institute and its multidisciplinary community. Faculty, staff and students work across the building's five floors in an environment designed to foster collaboration.

Research seminars and presentations take place every week, both virtually and in person, giving students ample opportunities to engage directly with faculty.

The Oden Institute’s partnership with the Texas Advanced Computing Center (TACC) enables access to the world's leading supercomputing facilities, both in our own dedicated space in the O'Donnell Building and at TACC's main facility at the Pickle Research Campus.

With ~100 students enrolled in our interdisciplinary PhD and Master’s programs in Computational Science, Engineering and Mathematics (CSEM), the Institute is always a lively and exciting place to be on campus.

Peace and quiet can be found in any of the beautiful and serene courtyards located in and around the Oden Institute.